Data Visualization Translates Geyser Eruption Data Into Eerie Music

The project earned grad student Anna Barth a grand prize in the American Geophysical Union’s competition on Data Visualization and Storytelling.

Anna Barth, a graduate student at Columbia University’s Lamont-Doherty Earth Observatory, was recently named a grand prize winner in the American Geophysical Union’s competition on Data Visualization and Storytelling. She presented her project — which translates eruption data from the Lone Star geyser in Yellowstone National Park into sound and visuals — at the AGU meeting in San Francisco this week.

Barth is a fifth-year graduate student working with Professor Terry Plank to study volcanic eruptions, including the fluid dynamics of magma and the causes of explosive eruptions. Her award-winning project grew out of coursework for a data visualization and sonification class taught by geophysicist Ben Holtzman.

Although Barth and her classmates originally set out to create a visualization around predicting volcanic eruptions, there wasn’t enough open-access volcano monitoring data available. So they decided to focus on geysers instead. Geysers work similarly to volcanoes, but offer a few advantages to researchers. They erupt more frequently and regularly than volcanoes, offering more chances to collect data. “And you can get closer to them without worrying that you’re going to get killed or destroy your instruments,” says Barth.

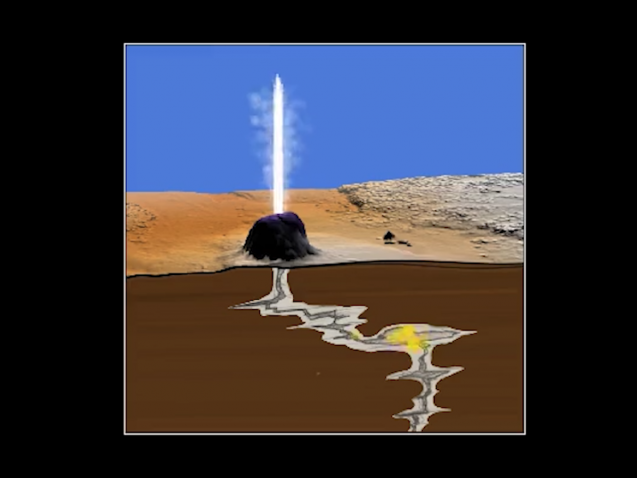

The Lone Star geyser erupts every three hours, on average, and each eruption consists of four distinct phases. Using data collected by the U.S. Geological Survey, Barth created a visualization that can help scientists to study these four phases in a new way, and look for patterns that could potentially enhance forecasts for volcanic eruptions.

To study the relationships between these different phases, scientists normally look at charts showing things like water discharge from the geyser, seismic activity, and deformation of the ground. They stack these charts on top of one another, with time on the horizontal axis and different variables on the vertical axes, and try to look for patterns across time and space.

“You have to kind of squint your eyes to see the relationships between them,” explains Barth. To get around this problem, the group started creating a data visualization that engages both the eyes and the ears. The resulting 4D visualization allows the eyes to pick out spatial patterns in the data, while the ears detect temporal patterns.

Barth has continued to work on the visualization project in the two years since taking Holtzman’s class. Now she’s writing up a paper about the results.

Video: Lone Star Geyser, Yellowstone, from seismicsoundlab on Vimeo.

In her presentation at AGU (shown above), Barth simulates three types of data from the Lone Star geyser. Water spurts sound like high-pitched, flutey bleeps; seismic tremors sizzle; and the deformation of the ground is reminiscent of the lower end of a pipe organ. Corresponding visuals appear at the same time.
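For readers curious how data can become sound at all, the following is a minimal sketch of one common approach, parameter-mapping sonification, in which each data value sets the pitch of a short tone. It is not Barth's actual method, which the article does not describe in detail. The function names, the frequency range, and the discharge readings are all made up for illustration.

```python
import math

def map_to_frequency(values, f_low=220.0, f_high=880.0):
    """Linearly rescale data values onto a frequency range in Hz.

    The 220-880 Hz range is an arbitrary illustrative choice.
    """
    lo, hi = min(values), max(values)
    span = hi - lo
    if span == 0:
        # A flat series has no variation to map, so use the lowest pitch.
        return [f_low for _ in values]
    return [f_low + (v - lo) / span * (f_high - f_low) for v in values]

def synthesize(frequencies, note_seconds=0.1, sample_rate=8000):
    """Render one sine tone per data point, keeping the phase continuous."""
    samples = []
    phase = 0.0
    samples_per_note = round(note_seconds * sample_rate)
    for f in frequencies:
        step = 2 * math.pi * f / sample_rate
        for _ in range(samples_per_note):
            samples.append(math.sin(phase))
            phase += step
    return samples

# Hypothetical, made-up water discharge readings (not USGS data).
discharge = [0.0, 0.2, 0.1, 1.0, 0.8, 0.0]
freqs = map_to_frequency(discharge)
audio = synthesize(freqs)
```

Here larger discharge values produce higher tones, so a burst of water would be heard as a jump in pitch. The resulting samples could be written to a sound file for playback. A richer sonification would presumably give each data type its own timbre, as the flute-like, sizzling, and organ-like sounds in the video suggest.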

The visualization illustrates the four phases of the geyser’s eruption cycle:

- Pre-play – the sporadic spurts of hot water that precede an eruption;

- Eruption – when vigorous jets of water and steam burst out for periods of about 20 minutes;

- Post-eruption relaxation – characterized by steam venting and high-frequency seismic signals;

- Conduit refilling – when the ground slowly deforms around the vent, without surface discharge or significant seismic activity.

Barth’s visualization explores the similarities and differences in these phases from three eruptions in 2010 compared to three more in 2014. The comparison raises several questions. For one, it shows that the timing of the pre-play stage varies a lot between different cycles — how, then, do the eruptions continue to work like clockwork? What happened to delay the third eruption in 2010? And what is the causal relationship between seismic tremor and eruptions?

Although the movie is just a proof-of-concept for now, Barth hopes that the richer sensory experience will help to reveal new patterns in the data, and that it will allow even non-scientists to bring new questions and observations to light.

After she earns her PhD, Barth plans to continue developing her data visualization and sonification skills with Holtzman and Leif Karlstrom of the University of Oregon. She’ll explore a new dataset from Hawaii’s 2018 Kilauea eruption, and use visualization and sonification to find new scientific questions to work on.